Some years ago, Bain & Company published a report titled “Closing the Delivery Gap: How to Achieve True Customer-Led Growth.” Out of that report came what has come to be known as the 80/8 statistic: “80% of organizations believed they delivered a ‘superior experience’ to their customers; however, only 8% of customers felt the same way,” Bain wrote.

While the 80/8 stat is old, the lesson it teaches persists. We can all ask the question, “How do our customers really feel about the customer service we give them?”

I recently had one of the worst customer service experiences I’ve ever had; it was with a merchant services organization (graphic below courtesy of Vonage).

I was trying to access my account online, but the system wouldn’t let me. It said my password had expired and that my account had been disabled. After two calls to the company’s support line, I was told to make a third call to IT support, where a recorded message said to leave a message and the IT people would get back to me. They finally did, but it took more than two hours to resolve what should have been a simple, two-minute fix. In fact, this could easily have been handled through a website password reset feature or through a bot fronting the call center. I suppose the execs in this company believe they’re offering great customer service, but as the customer, I don’t. It appears the 80/8 stat may still accurately describe some customer service experiences today.

As organizations continue to adapt and refine how to delight their customers, and as they modify their own internal practices, using intelligent virtual agents or bots will likely be part of these efforts. I was a bit surprised while reading a recent No Jitter article written by an industry colleague who said that “most chatbots don’t work.” A more accurate statement, I think, is that “most poorly designed chatbots don’t work.” I’m aware of many, many well-designed bots that are working fine, providing value to millions of people and the companies that have launched them. Although this bot-promoting Decoding Dialogflow series focuses on Google Dialogflow, Google Contact Center AI (CCAI), and the Dialogflow partner ecosystem, I’m purposefully including some good design principles that apply universally, in nearly any intelligent bot project.

Mining Your Organization for Data

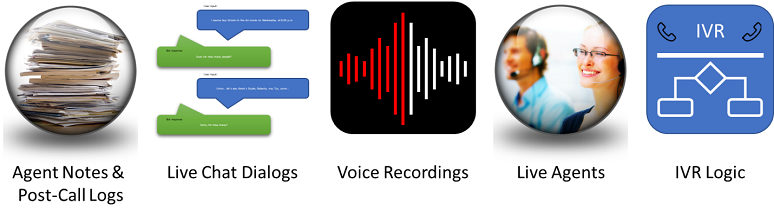

AI projects need data, and those associated with chatbots are no exception. The good news is that data for creating intelligent bots is plentiful in a well-run contact center. The bad news is that mining the data sources isn’t always easy. In the graphic below, I reference five different locations where data relating to customer intents and the accompanying entities can be found. This isn’t an all-inclusive list: Your organization will likely have all of the sources I show, but it may have others as well.

Which of these is the most productive to mine when seeking the data necessary to build a chatbot? Here’s my take:

1. Agent Notes & Post-Call Logs

- Unless the company has been rigorous in how it creates post-call logs and customer notes, an automated tool would have a hard time going through them and extracting the data needed for a chatbot.

- However, in cases where contact centers do use some rigor, one way to identify the most important customer inquiries, and hence “intents,” is to examine the “tags” agents have assigned to their post-call logs.

- Call logs are likely to be inconsistent and somewhat messy.

- Another downside is that these notes won’t contain customer utterances, so while they may help in identifying intents, they won’t provide the customer phrases needed to train Dialogflow.

2. Live Chat Dialogs

- These dialogs presently are the optimal source for chatbot data because they include actual customer expressions indicating intents and entities.

- Using the Dialogflow training function, a bot developer can import these phrases from chat logs. Following the import, the developer can look to see which phrases match intents and which don’t. In addition, developers can manually assign phrases to the correct intents.
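Before importing, the chat logs usually need a little cleanup so that only customer phrases go in. Here is a minimal sketch of that step in Python. It assumes a hypothetical chat export in which each turn has a `speaker` and a `text` field; your chat platform’s export will look different. It also assumes the import wants plain text with one phrase per line, which is worth confirming against the current Dialogflow documentation.

```python
def customer_phrases(chat_log):
    """Return unique customer utterances, in first-seen order."""
    seen = set()
    phrases = []
    for turn in chat_log:
        # Keep only what the customer typed; agent turns are not training phrases.
        if turn["speaker"] != "customer":
            continue
        text = " ".join(turn["text"].split())  # collapse stray whitespace
        key = text.lower()                     # treat case variants as duplicates
        if text and key not in seen:
            seen.add(key)
            phrases.append(text)
    return phrases


# Hypothetical export format, for illustration only.
chat_log = [
    {"speaker": "customer", "text": "I need to reset my password"},
    {"speaker": "agent", "text": "Sure, I can help with that."},
    {"speaker": "customer", "text": "i need to reset my password"},
    {"speaker": "customer", "text": "My account  is disabled"},
]

# One phrase per line, ready to save as a text file.
upload_text = "\n".join(customer_phrases(chat_log))
print(upload_text)
```

Dropping duplicates keeps the import small, but you may prefer to keep counts so you know which phrases customers use most often.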

3. Voice Recordings

- You’d think that voice recordings should be a mecca for customer data. But hairballs come with the use of voice recordings.

- In order to process voice recordings, a speech-to-text natural language processing (NLP) engine would need to convert the recordings to text. Then the transcript could be fed into a training engine. There are two significant problems, however. The first is the quality of the speech-to-text engine -- you need high-quality transcripts to get good results. Google presently recommends that humans transcribe the audio data to get good transcripts. The second issue is separating agent speech from customer speech. Some contact centers record agent and customer voice on different channels. Separating the agent’s voice from the customer’s voice clarifies which text comes from the customer and which is from the agent.

- Tools that can use voice and chat transcripts to extract data are becoming available. Google has indicated it can do a pretty good job of identifying intents from large data repositories if the speech-to-text is highly accurate. Also, Google is working on a CCAI tool called Conversation Topic Modeler, which can examine text streams for intents and entities. Conversation Topic Modeler is currently in alpha; Google has indicated it is a top development priority and that we should look for some announcements on this product later in the year. (As an aside, Nuance announced a similar capability, called Pathfinder, which should be available sometime this summer.)

4. Live Agents

- Live agents have real-world experience in the contact center responding to a variety of customer service requests.

- Extracting data from live agents is likely to be a time-consuming and demanding process. It may need some sort of trained facilitator or at the least a structured approach.

- Google reports that using live agents to identify intents does work, but that it rarely sees it in practice from Dialogflow customers who have created chatbots. Most customers collect data from agent conversations rather than interviewing the agents themselves because there’s typically such a large amount of readily available data.

5. IVR System

- The IVR system contains a lot of business logic and routing information. It’ll typically have many customer intents built into it. This can be a first step to identifying intents.

- What the IVR system lacks is the precise phrasing customers will use to identify the intent for which they’re calling. So, while IVR systems are good data sources, bot developers still need transcripts of real customer phrasing, typed or spoken, that identifies intents.
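As a rough first pass at finding candidate intents, the agent tags mentioned under Agent Notes & Post-Call Logs can simply be counted. The sketch below is one way to do that in Python. The log structure (a `tags` list on each record) is hypothetical, and real call logs will likely need more cleanup than the case and whitespace normalization shown here.

```python
from collections import Counter


def rank_tags(call_logs):
    """Count how many call logs carry each tag, most frequent first."""
    counts = Counter()
    for log in call_logs:
        # Normalize case and spacing so "Password Reset" and "password reset "
        # count as the same tag; the set avoids double counting within one log.
        counts.update({tag.strip().lower() for tag in log.get("tags", [])})
    return counts.most_common()


# Hypothetical post-call log records, for illustration only.
call_logs = [
    {"id": 1, "tags": ["Password Reset", "Account Locked"]},
    {"id": 2, "tags": ["password reset"]},
    {"id": 3, "tags": ["Billing Question", "password reset "]},
]

for tag, count in rank_tags(call_logs):
    print(f"{count:3d}  {tag}")
```

The most frequent tags are reasonable candidates for a bot’s first intents, although, as noted above, the tags won’t supply the customer phrasing needed for training.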

Building an Intent Library

As you build up your chatbot’s capabilities, it’s important to create an intent library that will scale with your bot’s abilities. This is similar to how many software projects require that functions, objects, and variables have naming conventions. Intent categorization and names must be created in such a way that multiple AI trainers will categorize similar expressions consistently to the same intent.
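To make the naming-convention idea concrete, here is a small sketch that checks intent names against a pattern. The convention itself (lowercase, dot-separated parts such as `account.password.reset`) is purely an assumption for illustration; Dialogflow doesn’t require it, and your team may well choose something different.

```python
import re

# Hypothetical convention: two to four lowercase parts separated by dots,
# for example "account.password.reset". Adjust the pattern to your own rules.
INTENT_NAME = re.compile(r"^[a-z][a-z0-9_]*(\.[a-z][a-z0-9_]*){1,3}$")


def invalid_intent_names(names):
    """Return the intent names that do not follow the convention."""
    return [name for name in names if not INTENT_NAME.match(name)]


names = [
    "account.password.reset",
    "Reset Password",           # spaces and capitals break the convention
    "billing.invoice.request",
    "orders",                   # no category prefix
]
print(invalid_intent_names(names))
```

A check like this can run whenever an AI trainer proposes a new intent, so naming drift gets caught before the library grows.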

Teaching Dialogflow Your Unique Lexicon

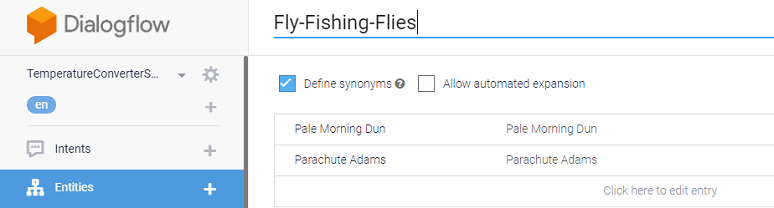

Many organizations will use particular words or phrases that the chatbot will need to process correctly. These may be words that are unique to an industry, or they may be words that are used in a peculiar way.

My current favorite example is a fly-fishing chatbot for purchasing flies -- the bot would need to know the names of certain fly patterns, such as “Pale Morning Dun” or “Parachute Adams.” These words wouldn’t make sense in other contexts, but they and many others like them are the language of fly fishermen.

Likewise, many specialties will have their own lexicons, and Dialogflow needs to understand them.

Interestingly, you specify a lexicon to Dialogflow through the entities associated with an intent and through the words used in the training phrases for identifying that intent.

In the fly-fishing sales bot example, I would first create an entity called Fly-Fishing-Flies in Dialogflow. Within that entity, I could then define different types of flies, including the Pale Morning Dun and the Parachute Adams.
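For readers who prefer to see the structure rather than the console, here is a sketch of what that entity might look like as data. The field names (`displayName`, `kind`, `entities`, `value`, `synonyms`) follow my understanding of the Dialogflow ES entity type resource, so treat them as something to verify against the API reference. The “PMD” synonym is my own illustrative addition.

```python
import json

# Sketch of the Fly-Fishing-Flies entity as a Dialogflow ES-style entity type.
# "KIND_MAP" means each entry has a reference value plus synonyms.
fly_entity_type = {
    "displayName": "Fly-Fishing-Flies",
    "kind": "KIND_MAP",
    "entities": [
        # "PMD" is an illustrative synonym, not taken from the article's graphic.
        {"value": "Pale Morning Dun", "synonyms": ["Pale Morning Dun", "PMD"]},
        {"value": "Parachute Adams", "synonyms": ["Parachute Adams"]},
    ],
}

print(json.dumps(fly_entity_type, indent=2))
```

Synonyms are where much of a specialty lexicon lives: they let the bot map the shorthand customers actually type to one reference value.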

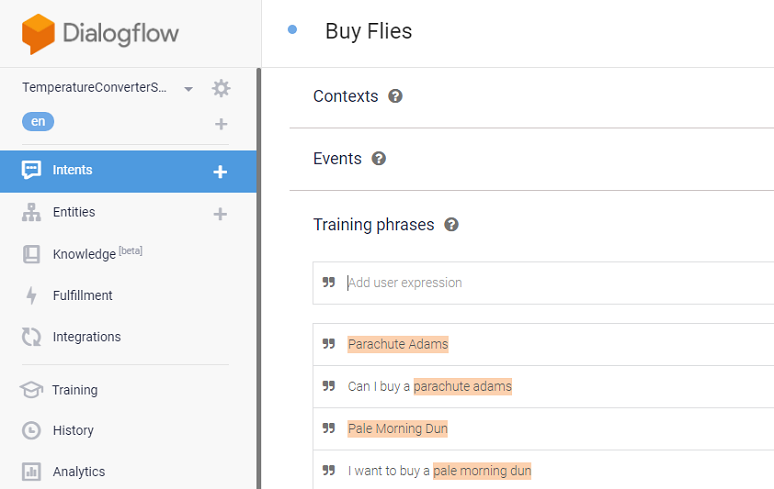

Once I’ve prepared my specific lexicon within a Dialogflow entity, I would create a “Buy Flies” intent that would use this entity. Provided with training phrases containing these special words, Dialogflow automatically detects the fly types, highlighted as entities in the graphic below, from within the training phrase.
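Dialogflow does this annotation for you, but it can help to see roughly what an annotated training phrase looks like underneath. The sketch below mimics the result in Python by splitting a phrase into parts and marking the fly name. The part fields (`text`, `entityType`, `alias`) reflect my understanding of the Dialogflow ES training phrase format; the `annotate` helper and the `fly` alias are hypothetical, and the simple string matching is in no way how Dialogflow itself detects entities.

```python
def annotate(phrase, entity_values, entity_type="@Fly-Fishing-Flies", alias="fly"):
    """Split a phrase into Dialogflow-style parts, marking the first known fly name."""
    lowered = phrase.lower()
    for value in entity_values:
        start = lowered.find(value.lower())
        if start == -1:
            continue  # this fly name is not in the phrase
        end = start + len(value)
        parts = [
            {"text": phrase[:start]},
            {"text": phrase[start:end], "entityType": entity_type, "alias": alias},
            {"text": phrase[end:]},
        ]
        # Drop empty leading or trailing parts.
        return [part for part in parts if part["text"]]
    return [{"text": phrase}]  # nothing to annotate


flies = ["Pale Morning Dun", "Parachute Adams"]
for part in annotate("I want to buy a Parachute Adams", flies):
    print(part)
```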

Perhaps I’ve been a bit geeky and maybe a bit too technical here, but the point is that teaching Dialogflow a specific lexicon is straightforward, particularly for chat-based bots. For speech-enabled bots, Dialogflow supports “speech biasing,” which is a method for helping the speech-to-text engine recognize unusual words, phrases, or word patterns, like the odd names for my fly-fishing flies.
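As a rough illustration of speech biasing, the sketch below builds the audio portion of a detect-intent request with the fly names supplied as hints. The field names (`speechContexts`, `phrases`, `boost`) follow my understanding of the Dialogflow ES audio configuration and should be checked against the API reference. The encoding, sample rate, and boost value are assumptions chosen only for the example.

```python
import json


def biased_audio_config(phrases, boost=10.0):
    """Build a Dialogflow ES-style audio config that hints at unusual phrases."""
    return {
        "audioEncoding": "AUDIO_ENCODING_LINEAR_16",  # assumed encoding
        "sampleRateHertz": 8000,                      # assumed telephony sample rate
        "languageCode": "en-US",
        # The hint list nudges speech-to-text toward these phrases;
        # the boost value is an arbitrary starting point to tune.
        "speechContexts": [{"phrases": list(phrases), "boost": boost}],
    }


config = biased_audio_config(["Pale Morning Dun", "Parachute Adams"])
print(json.dumps(config, indent=2))
```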

In Summary

- You need customer data to train your chatbot.

- Live chat dialogs are currently one of the best sources of training data.

- Call recordings, while rich in data, are hard to mine right now, although developments are underway to enable automating the mining of data from these recordings.

- You need to teach your chatbot terms and phrases that are unique to your business or industry.

What’s Next

The next article in this series will appear in early August and will focus on how you actually create intents and input training phrases for use in Dialogflow-based chatbots.