As a delegate, I recently participated in Networking Field Day 25, an event that unites thought leaders and companies within the networking space to discuss advancing technology and core issues within enterprise IT. Three companies (VMware, PathSolutions, and Kemp) presented network management products, a technology area that I track. In this article, I’ll describe each company’s products, how they fit into the market, what I like about them, and future enhancements they could make.

VMware: vRealize Network Insight

At NFD16 back in 2017, a small startup called Veriflow presented a network verification product that uses mathematical validation of the network. It’s an interesting approach that occasionally appears in academic discussions: can a network be mathematically analyzed to prove whether connectivity between sets of endpoints exists or is denied? This type of analysis is fundamental for effective network security. Technical debt, often in the form of old firewall rules or forgotten network links, can poke holes in an otherwise good network security design.

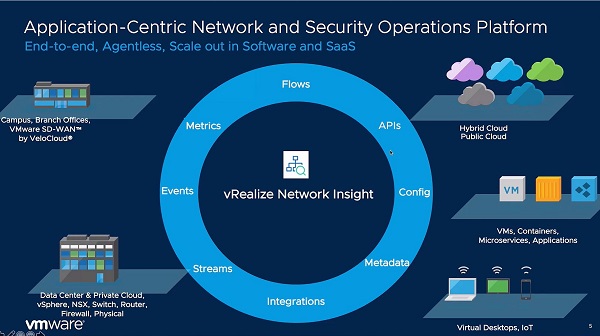

VMware liked the technology enough to acquire Veriflow and is calling it vRealize Network Insight (vRNI). It examines the network’s operational state and checks that it matches the intended state. In other words, it uses the configurations and operational state of the real network to create a digital twin, a virtual replica on which the analysis runs. I should note here that this digital twin can’t be used to test automation processes. It’s most useful for evaluating how connectivity changes when the network state changes, for example by simulating a link failure or adding new links.
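To make the idea of "simulating a link failure" concrete, here is a minimal sketch of a reachability check on a toy model of a network. It is not how vRNI works internally; real verification tools model forwarding tables, firewall rules, and overlays. The topology, the node names, and the functions `reachable` and `single_points_of_failure` are all hypothetical.

```python
from collections import deque

def reachable(links, src, dst, failed=frozenset()):
    """Return True if dst can be reached from src over links not in `failed`."""
    # Build an undirected adjacency map, skipping any failed links.
    adjacency = {}
    for a, b in links:
        if (a, b) in failed or (b, a) in failed:
            continue
        adjacency.setdefault(a, set()).add(b)
        adjacency.setdefault(b, set()).add(a)
    # Breadth-first search outward from src.
    seen = {src}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            return True
        for neighbor in adjacency.get(node, ()):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    return False

def single_points_of_failure(links, src, dst):
    """List the links whose individual failure disconnects src from dst."""
    return [link for link in links
            if not reachable(links, src, dst, failed={link})]

# Hypothetical topology: two WAN paths from a branch to the core,
# but only one link from the core to the data center.
LINKS = [
    ("branch", "wan-a"), ("wan-a", "core"),
    ("branch", "wan-b"), ("wan-b", "core"),
    ("core", "dc"),
]

print(single_points_of_failure(LINKS, "branch", "dc"))
```

In this toy model, losing either WAN path leaves the branch connected, while the single core-to-data-center link is flagged. A digital twin lets you ask that kind of what-if question without touching the production network.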

vRNI’s analysis is particularly useful for modern networks where services exist in the public cloud and the users of those services can be located anywhere. The traffic overlays that are prevalent in software-defined networking (SD-WAN, SD Data Center, and SD-Branch) help drive the need for such analysis tools. The figure below depicts the breadth of data sources, which significantly improves the IT team’s ability to diagnose what’s happening with applications in terms of both security and performance.

But does it work? A major healthcare provider is successfully using it in production across their infrastructure. I’d say that’s a pretty compelling example.

I liked that their demonstration showed 149 applications, because most product demonstrations are based on toy networks that don’t show a product’s ability to scale up to handle modern networks. They have customers with 9,000 applications, and it’s going to be interesting to see where VMware goes with vRNI. This type of network analysis is new and very powerful. I’d like the opportunity to use it in a production network. It’s certainly worth a look.

PathSolutions: TotalView

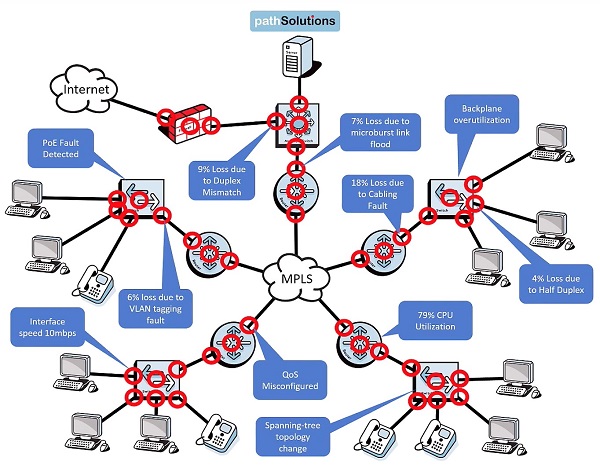

Tim Titus, PathSolutions founder and CTO, presented TotalView as a panelist at Enterprise Connect, at NFD20, and now at NFD25. TotalView provides good visibility into network functionality and identifies common problems. The implementation is based on a single server that automatically discovers what’s on the network, based on the address ranges you provide. It uses Simple Network Management Protocol (SNMP) to query the network devices for a concise set of data that drives its expert analysis. The graphic below shows some of the common problems, such as misconfigured quality of service (QoS), spanning-tree topology changes, duplex mismatches, high processor utilization, and cabling faults.
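As an illustration of how a small set of polled data can drive rule-based findings, here is a minimal sketch that checks both ends of a link. It is not PathSolutions’ analysis; the `InterfaceSample` fields, the 1% error threshold, and the device names are assumptions, and the values are presumed to have been collected already (for example, via SNMP).

```python
from dataclasses import dataclass

@dataclass
class InterfaceSample:
    device: str
    port: str
    duplex: str       # "full" or "half", as reported by the device
    in_errors: int    # input errors counted during the polling interval
    in_packets: int   # input packets counted during the polling interval

def error_rate(sample):
    """Fraction of received packets that had errors in this interval."""
    if sample.in_packets == 0:
        return 0.0
    return sample.in_errors / sample.in_packets

def find_problems(link_ends, max_error_rate=0.01):
    """Check both ends of one link and return human-readable findings."""
    a, b = link_ends
    findings = []
    # Rule 1: the two ends of a link should agree on duplex.
    if a.duplex != b.duplex:
        findings.append(
            f"duplex mismatch: {a.device}/{a.port} is {a.duplex}, "
            f"{b.device}/{b.port} is {b.duplex}"
        )
    # Rule 2: flag any end whose error rate exceeds the (assumed) threshold.
    for end in (a, b):
        rate = error_rate(end)
        if rate > max_error_rate:
            findings.append(
                f"high error rate on {end.device}/{end.port}: {rate:.1%}"
            )
    return findings

# Hypothetical samples for the two ends of one link.
sw1 = InterfaceSample("sw1", "Gi0/1", "full", in_errors=500, in_packets=10_000)
sw2 = InterfaceSample("sw2", "Gi0/24", "half", in_errors=0, in_packets=10_000)
for finding in find_problems((sw1, sw2)):
    print(finding)
```

The point of the sketch is that a handful of counters per interface is enough to express many of the common problems as simple rules.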

A recent addition to the capabilities is a client-based agent that collects local network information like Wi-Fi characteristics (signal strength, channel ID, retransmissions, signal-to-noise ratio) and identifies impediments to good work-from-home connectivity.

I like the focus on creating an affordable, easy-to-use system that aids medium-sized organizations with limited network staff. Just be aware that it’s likely to find many issues to address upon initial implementation. I recommend prioritizing the findings and resolving a few each day. You’ll soon find that the network, and the applications that use it, will experience fewer problems.

Kemp: Flowmon

At NFD16 Kemp presented application delivery fabric and application migration, at NFD20 they described load balancing and application delivery controllers, and now, at NFD25, they covered network performance monitoring and diagnostics. The basis of Kemp’s performance monitoring is the collection of flow data: NetFlow, Internet Protocol Flow Information Export (IPFIX), sampled flow (sFlow), or flow data from its Flowmon collectors. Flowmon can be deployed as either hardware or software probes. The software versions are useful for deployment into cloud environments.

Flowmon’s Network Performance Monitoring and Diagnostics (NPMD) uses a set of heuristics to analyze collected flow data, detecting security events (like failed login attempts from unexpected sources), surfacing application performance insights, and identifying symptoms of network problems like packet loss and high latency. It’s particularly useful for determining which application flows are using each path segment when packet loss or high latency is detected.
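To show what "which application flows are using each path segment" can look like in practice, here is a minimal sketch that totals bytes per conversation on one segment. The record layout, the segment labels, and the function `top_flows_on_segment` are hypothetical. In a real deployment the segment would be derived from the exporting device and interface rather than stored in the record, and this is not Flowmon’s implementation.

```python
from collections import defaultdict

# Hypothetical, simplified flow records:
# (source IP, destination IP, destination port, bytes, path segment)
FLOWS = [
    ("10.0.0.5", "203.0.113.10", 443, 1200, "wan-1"),
    ("10.0.0.5", "203.0.113.10", 443, 800, "wan-1"),
    ("10.0.0.7", "198.51.100.4", 22, 300, "wan-1"),
    ("10.0.0.9", "203.0.113.10", 443, 5000, "wan-2"),
]

def top_flows_on_segment(flows, segment, n=3):
    """Return the n conversations moving the most bytes over one segment."""
    totals = defaultdict(int)
    for src, dst, port, nbytes, seg in flows:
        if seg == segment:
            totals[(src, dst, port)] += nbytes
    # Sort conversations by total bytes, largest first.
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)[:n]

for conversation, nbytes in top_flows_on_segment(FLOWS, "wan-1"):
    print(conversation, nbytes)
```

When a segment shows packet loss or high latency, a ranking like this tells you which applications are affected and which ones might be contributing to the congestion.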

Analyzing flow data has three advantages:

- Flow data volume is much smaller than the underlying packet data, by factors of between 250:1 and 500:1

- Multi-vendor support for exporting standard flow data

- Focus on Layer 3/Layer 4 packet header data and flow size, including the contents of VXLAN tunnels
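The volume advantage comes from aggregation: many packets that share the same Layer 3/Layer 4 header fields collapse into one flow record. Here is a minimal sketch of that idea. The packet tuples and the function `packets_to_flows` are hypothetical, and the reduction you see in this toy example is not meant to reproduce the ratios quoted above.

```python
from collections import defaultdict

def packets_to_flows(packets):
    """Collapse per-packet header tuples into per-flow (packets, bytes) totals."""
    flows = defaultdict(lambda: [0, 0])
    for src, dst, proto, sport, dport, size in packets:
        key = (src, dst, proto, sport, dport)  # the classic 5-tuple
        flows[key][0] += 1      # packet count
        flows[key][1] += size   # byte count
    return {key: tuple(counts) for key, counts in flows.items()}

# Hypothetical traffic: a 1,000-packet download plus a single DNS query.
download = ("10.0.0.5", "203.0.113.10", "tcp", 51000, 443)
dns = ("10.0.0.5", "192.0.2.53", "udp", 53000, 53)
packets = [download + (1500,)] * 1000 + [dns + (80,)]

flows = packets_to_flows(packets)
print(len(packets), "packets ->", len(flows), "flow records")
```

Only header fields and sizes are kept, so the payload never needs to be stored, which is also why flow analysis keeps working when the payload is encrypted.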

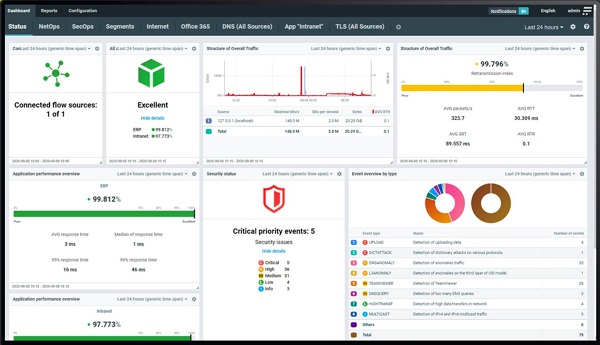

Flowmon can also perform full packet capture (see image below), which can be used for detailed analysis with tools like Wireshark. Just be aware that most application flows are encrypted, obscuring the packet payload.

Collecting flow data is one thing, but analyzing it is another. Kemp’s analysis uses expert-level heuristics to detect deviations from expected network behavior and translate them into findings that are reported via its comprehensive dashboard, seen below. You can easily drill down into any of the dashboard areas to investigate the details.
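Kemp didn’t detail its heuristics, so the following is only a generic sketch of one common way to detect a deviation from expected behavior: compare the current measurement with a baseline built from recent history. The function `is_anomalous`, the threshold of three standard deviations, and the sample values are all assumptions.

```python
import statistics

def is_anomalous(history, current, threshold=3.0):
    """Flag `current` if it is more than `threshold` standard deviations
    away from the mean of `history` (which needs at least two samples)."""
    mean = statistics.mean(history)
    stdev = statistics.stdev(history)
    if stdev == 0:
        # A perfectly flat baseline: any change at all is a deviation.
        return current != mean
    return abs(current - mean) / stdev > threshold

# Hypothetical baseline: flows per minute to one server over recent intervals.
baseline = [100, 110, 95, 105, 90]
print(is_anomalous(baseline, 108))  # within normal variation
print(is_anomalous(baseline, 400))  # far outside the baseline
```

A production system would use richer baselines (time of day, day of week) and many more signals, but the principle of comparing against learned normal behavior is the same.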

If you’re already one of Kemp’s 100,000 worldwide customers, using their Flowmon system makes a lot of sense. I’ve seen flow analysis systems quickly identify application timing details indicating that a cloud application provider was experiencing significant server delays. In one case, a software as a service (SaaS) provider’s application slowness was blamed on the network, which is a common occurrence. The network team quickly analyzed the application flow data and identified that server delays were the source of the slowness. The SaaS vendor was contacted and admitted a problem in the data center was causing the slowness. The ticket was resolved within fifteen minutes.
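The reasoning in that case can be sketched with simple arithmetic on timestamps: the TCP handshake gives an estimate of the network round trip, and whatever remains of the wait for the first response byte is attributed to the server. This is a simplified illustration, not the tool the team used. The function `split_delay` and the timestamps are hypothetical, and it assumes all four timestamps were observed near the client.

```python
def split_delay(syn_time, synack_time, request_time, first_response_time):
    """Estimate network round-trip time and server processing time (seconds)."""
    # The handshake involves almost no server work, so it approximates the RTT.
    network_rtt = synack_time - syn_time
    # The wait for the first response byte includes one round trip plus server time.
    wait = first_response_time - request_time
    server_delay = max(0.0, wait - network_rtt)
    return network_rtt, server_delay

# Hypothetical timestamps, in seconds since the start of the capture.
rtt, server = split_delay(0.000, 0.020, 0.025, 2.045)
print(f"network RTT ~{rtt * 1000:.0f} ms, server delay ~{server:.2f} s")
```

With numbers like these, a 20 ms round trip next to a two-second server delay makes it clear that the network is not the source of the slowness.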

The Future of Network Management

We’re starting to see analytics of network data at levels that weren’t possible 10 to 15 years ago, because earlier limitations in computing, memory, storage, and algorithms no longer exist. New approaches to network management promise to help us detect and resolve network problems. But at the same time, these new platforms can challenge us to adopt new processes and learn new things. It’s certainly an interesting and evolving field.