4. Most people have difficulty thinking beyond their highly informed context

When designing conversational applications, developers often build the solution around a set of pre-existing expectations. In other words, they expect users to say specific things in specific ways and to have a specific set of problems. In practice, users often phrase things in ways the designer didn’t or couldn’t predict, so include people with a variety of contexts and opinions in the design process. Then tune the application using real-world utterances from real callers. Remember that these are learning systems, and you need to let them learn.
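One way to ground that tuning in real caller data is to review the utterances the application failed to match. The sketch below is illustrative only: it assumes you can export a log of `(utterance, matched_intent)` pairs, with `None` where no intent matched. The function name and log format are hypothetical and not tied to any particular platform.

```python
from collections import Counter


def summarize_unmatched(log):
    """Return the fallback rate and the unmatched utterances, most frequent first.

    `log` is a list of (utterance, intent) pairs; intent is None when the
    application could not match the utterance to any intent.
    """
    unmatched = Counter(
        utterance.strip().lower() for utterance, intent in log if intent is None
    )
    fallback_rate = sum(unmatched.values()) / len(log) if log else 0.0
    return fallback_rate, unmatched.most_common()


# Hypothetical sample of logged caller utterances.
log = [
    ("I need toner", "order_supplies"),
    ("my printer is out of ink", None),
    ("My printer is out of ink", None),
    ("talk to a person", None),
]

rate, review_queue = summarize_unmatched(log)
print(f"fallback rate: {rate:.0%}")
for utterance, count in review_queue:
    print(count, utterance)
```

The most frequent unmatched utterances are usually the best candidates to add as training phrases or to route to a new intent.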

5. Be careful in very proprietary voice domains

When using a streaming speech API, like Google’s, keep in mind that it has been trained not on your data, but on everyone’s data. What’s important to you may not be important to others. As a result, the speech recognition engine can misinterpret what the user said, particularly when the caller uses vocabulary specific to your business.

There are a couple of good solutions to this problem:

1) You could switch from open speech to a closed grammar (a good virtual agent platform will allow you to do this). The closed grammar offers a domain-specific list of items to match against. For example, the caller might say “I need toner for my MFP M477fnw printer.” At that point in the dialog, the closed grammar ensures that the recognizer is expecting one of a domain-specific list of responses.

2) You can also now use Phrase Hints, which are supported by Google as well as some virtual agent platforms, to greatly improve the likelihood that your proprietary vocabulary is recognized reliably.
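As a rough illustration of phrase hints, the sketch below builds a JSON request body for Google’s Speech-to-Text REST API, where hints are passed as `phrases` inside `speechContexts` in the recognition config. The helper name, the bucket URI, and the hint list are assumptions made for this example; check the current API reference for the fields your audio format requires.

```python
import json


def build_recognize_request(audio_uri, phrase_hints, language_code="en-US"):
    """Build a Speech-to-Text `recognize` request body that carries phrase hints."""
    return {
        "config": {
            "languageCode": language_code,
            # Phrase hints: domain vocabulary the recognizer should favor.
            "speechContexts": [{"phrases": list(phrase_hints)}],
        },
        "audio": {"uri": audio_uri},
    }


request_body = build_recognize_request(
    "gs://example-bucket/call-audio.wav",  # hypothetical audio location
    ["MFP M477fnw", "toner"],              # proprietary terms from your domain
)
print(json.dumps(request_body, indent=2))
```

Keeping the hint list short and specific to the current step in the dialog tends to work better than sending your whole product catalog with every request.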

6. Allow the user to transfer… with context

Don’t lock your users inside of a virtual agent. Make sure they have the option to connect to a live agent. Many systems also allow you to detect anger and other emotions as the user speaks to the virtual agent. When that happens, get the user to a live agent, and give that agent the contextual background of the user’s conversation with the automated system so they can tactfully defuse the situation. In general, whenever a conversation passes to a human, give that person the benefit of understanding the previous conversation.

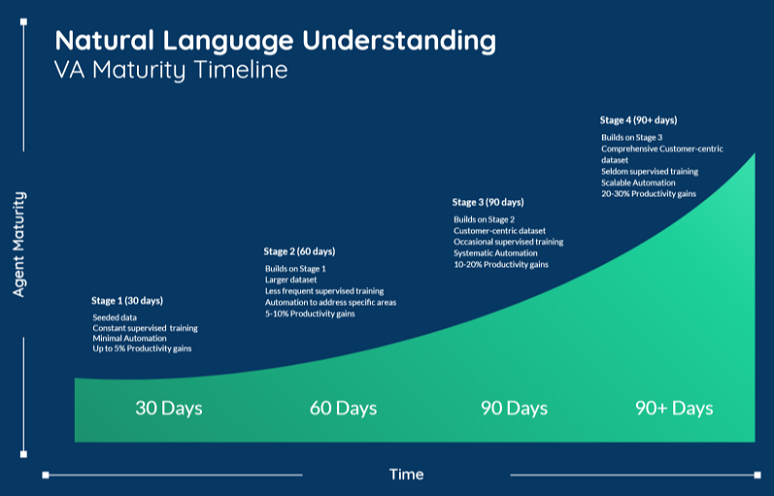
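A transfer with context can be sketched as a small payload that travels with the call. Everything below is hypothetical: the `Turn` and `HandoffContext` types, the sentiment scale, the threshold, and the keyword list are assumptions for illustration, not the API of any specific virtual agent platform.

```python
from dataclasses import dataclass, field, asdict


@dataclass
class Turn:
    speaker: str      # "caller" or "virtual_agent"
    text: str
    sentiment: float  # assumed scale: -1.0 (very negative) to 1.0 (very positive)


@dataclass
class HandoffContext:
    caller_id: str
    reason: str
    transcript: list = field(default_factory=list)


ANGER_THRESHOLD = -0.5  # hypothetical cutoff; tune against real calls
TRANSFER_KEYWORDS = ("agent", "representative", "human")


def check_escalation(caller_id, turns, threshold=ANGER_THRESHOLD):
    """Return a HandoffContext if the latest caller turn warrants a transfer, else None."""
    caller_turns = [t for t in turns if t.speaker == "caller"]
    if not caller_turns:
        return None
    latest = caller_turns[-1]
    if any(word in latest.text.lower() for word in TRANSFER_KEYWORDS):
        reason = "caller_requested_agent"
    elif latest.sentiment <= threshold:
        reason = "negative_sentiment"
    else:
        return None
    # Pass the full conversation so the live agent does not have to start over.
    return HandoffContext(caller_id, reason, list(turns))


turns = [
    Turn("caller", "I need toner for my printer", 0.1),
    Turn("virtual_agent", "Which printer model do you have?", 0.0),
    Turn("caller", "I already told you twice, this is ridiculous", -0.8),
]

handoff = check_escalation("caller-123", turns)
if handoff is not None:
    print(asdict(handoff))  # payload to send to the live agent's desktop
```

The important design choice is that the transfer reason and the full transcript move together, so the live agent sees both why the call arrived and what was already said.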

7. With natural language, deployment is not the end

Remember that a virtual agent that uses a natural language processing (NLP) engine like Dialogflow is not an IVR. It’s designed to learn and mature over time. Make sure that you plan for this. We typically see deployments follow a common maturity path:

- 30 days – During the first stage, you’ll invest in the foundations of natural language understanding (NLU). This involves providing relevant data and constant supervised training.

- 60 days – At this point, you’ll have established your training processes and determined what data you’ll need going forward to improve your NLU capabilities.

- 90 days – This is a typical “crossover” point where the system begins to deliver real value in terms of NLU for your customers. This is the point where ROI becomes measurable.

- 90+ days – NLU automation becomes scalable outside of the initial scope of the project.

Conclusion

Google Contact Center AI is becoming widely adopted by both vendors and enterprises as a solution to assist live agents and improve self-service for customers. The question now for most enterprises is how to build and deploy solutions that harness its power with the least amount of effort, while providing the greatest return on investment. NLU and conversational AI are driving the next wave of call center innovation, and we’ll continue to deliver the tools necessary to take full advantage of these emerging technologies.